Merge branch 'master' into patch-1

commit bb7d8735cb

123 changed files with 5663 additions and 4379 deletions


.travis.yml (21 lines changed)

@@ -1,5 +1,20 @@
dist: bionic

# Work around broken Travis Crystal image
addons:
  apt:
    packages:
      - gcc
      - pkg-config
      - git
      - tzdata
      - libpcre3-dev
      - libevent-dev
      - libyaml-dev
      - libgmp-dev
      - libssl-dev
      - libxml2-dev

jobs:
  include:
    - stage: build

@@ -9,6 +24,7 @@ jobs:
      language: crystal
      crystal: latest
      before_install:
        - crystal --version
        - shards update
        - shards install
      install:

@@ -28,7 +44,4 @@ jobs:
        - docker-compose build
      script:
        - docker-compose up -d
        - sleep 15 # Wait for cluster to become ready, TODO: do not sleep
        - HEADERS="$(curl -I -s http://localhost:3000/)"
        - STATUS="$(echo $HEADERS | head -n1)"
        - if [[ "$STATUS" != *"200 OK"* ]]; then echo "$HEADERS"; exit 1; fi
        - while curl -Isf http://localhost:3000; do sleep 1; done

CHANGELOG.md

@@ -400,7 +400,7 @@ An `/api/v1/stats` endpoint has been added with [#356](https://github.com/omarro

## For Developers

`/api/v1/channels/:ucid` now provides an `autoGenerated` tag, which returns true for [topic channels](https://www.youtube.com/channel/UCE80FOXpJydkkMo-BYoJdEg), and larger [genre channels](https://www.youtube.com/channel/UC-9-kyTW8ZkZNDHQJ6FgpwQ) generated by YouTube. These channels don't have any videos of their own, so `latestVideos` will be empty. It is recommended instead to display a list of playlists generated by YouTube.
`/api/v1/channels/:ucid` now provides an `autoGenerated` tag, which returns true for topic channels, and larger genre channels generated by YouTube. These channels don't have any videos of their own, so `latestVideos` will be empty. It is recommended instead to display a list of playlists generated by YouTube.

You can now pull a list of playlists from a channel with `/api/v1/channels/playlists/:ucid`. Supported options are documented in the [wiki](https://github.com/omarroth/invidious/wiki/API#get-apiv1channelsplaylistsucid-apiv1channelsucidplaylists). Pagination is handled with a `continuation` token, which is generated on each call. Of note is that auto-generated channels currently have one page of results, and subsequent calls will be empty.
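
The pagination described in this changelog entry could be consumed roughly as follows. This is a minimal sketch, not code from the repository: the helper name `fetchAllPlaylists`, the injected `fetchJson` function, the `continuation` query parameter name, and the response shape `{ playlists, continuation }` are all assumptions made for illustration; the wiki page linked above is the authority on the actual options.

```javascript
// Hypothetical helper: walks every page of a channel's playlists.
// `fetchJson` is injected so the sketch runs without a network; in a browser it
// could be `function (url) { return fetch(url).then(function (r) { return r.json(); }); }`.
async function fetchAllPlaylists(baseUrl, ucid, fetchJson, maxPages = 10) {
    const playlists = [];
    let continuation = null;

    for (let page = 0; page < maxPages; page++) {
        let url = baseUrl + '/api/v1/channels/playlists/' + encodeURIComponent(ucid);
        if (continuation) {
            // Assumption: the token is passed back as a `continuation` query parameter.
            url += '?continuation=' + encodeURIComponent(continuation);
        }

        // Assumed response shape: { playlists: [...], continuation: string | null }
        const body = await fetchJson(url);
        const items = body.playlists || [];

        // Auto-generated channels return empty pages after the first one, so an
        // empty page is treated as the end of the list.
        if (items.length === 0) { break; }
        playlists.push(...items);

        if (!body.continuation) { break; }
        continuation = body.continuation;
    }
    return playlists;
}
```

The `maxPages` cap is a design choice of the sketch: because a fresh token is generated on each call, the loop cannot rely on the token disappearing to know when to stop.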


README.md (167 lines changed)

@@ -1,14 +1,18 @@
# Invidious

[](https://travis-ci.org/omarroth/invidious)
[](https://travis-ci.org/github/iv-org/invidious) [](https://hosted.weblate.org/engage/invidious/)

## Invidious is an alternative front-end to YouTube

## Invidious instances:

[Public Invidious instances are listed here.](https://github.com/iv-org/invidious/wiki/Invidious-Instances)

## Invidious features:

- [Copylefted libre software](https://github.com/iv-org/invidious) (AGPLv3+ licensed)
- Audio-only mode (and no need to keep window open on mobile)
- [Free software](https://github.com/omarroth/invidious) (AGPLv3 licensed)
- No ads
- No need to create a Google account to save subscriptions
- Lightweight (homepage is ~4 KB compressed)
- Lightweight (the homepage is ~4 KB compressed)
- Tools for managing subscriptions:
  - Only show unseen videos
  - Only show latest (or latest unseen) video from each channel

@ -18,37 +22,33 @@

|

|||

- Dark mode

|

||||

- Embed support

|

||||

- Set default player options (speed, quality, autoplay, loop)

|

||||

- Does not require JS to play videos

|

||||

- Support for Reddit comments in place of YT comments

|

||||

- Support for Reddit comments in place of YouTube comments

|

||||

- Import/Export subscriptions, watch history, preferences

|

||||

- [Developer API](https://github.com/iv-org/invidious/wiki/API)

|

||||

- Does not use any of the official YouTube APIs

|

||||

- Developer [API](https://github.com/omarroth/invidious/wiki/API)

|

||||

- Does not require JavaScript to play videos

|

||||

- No need to create a Google account to save subscriptions

|

||||

- No ads

|

||||

- No CoC

|

||||

- No CLA

|

||||

- [Multilingual](https://hosted.weblate.org/projects/invidious/#languages) (translated into many languages)

|

||||

|

||||

Liberapay: https://liberapay.com/omarroth

|

||||

BTC: 356DpZyMXu6rYd55Yqzjs29n79kGKWcYrY

|

||||

BCH: qq4ptclkzej5eza6a50et5ggc58hxsq5aylqut2npk

|

||||

|

||||

## Invidious Instances

|

||||

|

||||

See [Invidious Instances](https://github.com/omarroth/invidious/wiki/Invidious-Instances) for a full list of publicly available instances.

|

||||

|

||||

### Official Instances

|

||||

|

||||

- [invidio.us](https://invidio.us) 🇺🇸

|

||||

Issuer: Let's Encrypt, [SSLLabs Verification](https://www.ssllabs.com/ssltest/analyze.html?d=invidio.us)

|

||||

- [kgg2m7yk5aybusll.onion](http://kgg2m7yk5aybusll.onion)

|

||||

- [axqzx4s6s54s32yentfqojs3x5i7faxza6xo3ehd4bzzsg2ii4fv2iid.onion](http://axqzx4s6s54s32yentfqojs3x5i7faxza6xo3ehd4bzzsg2ii4fv2iid.onion)

|

||||

|

||||

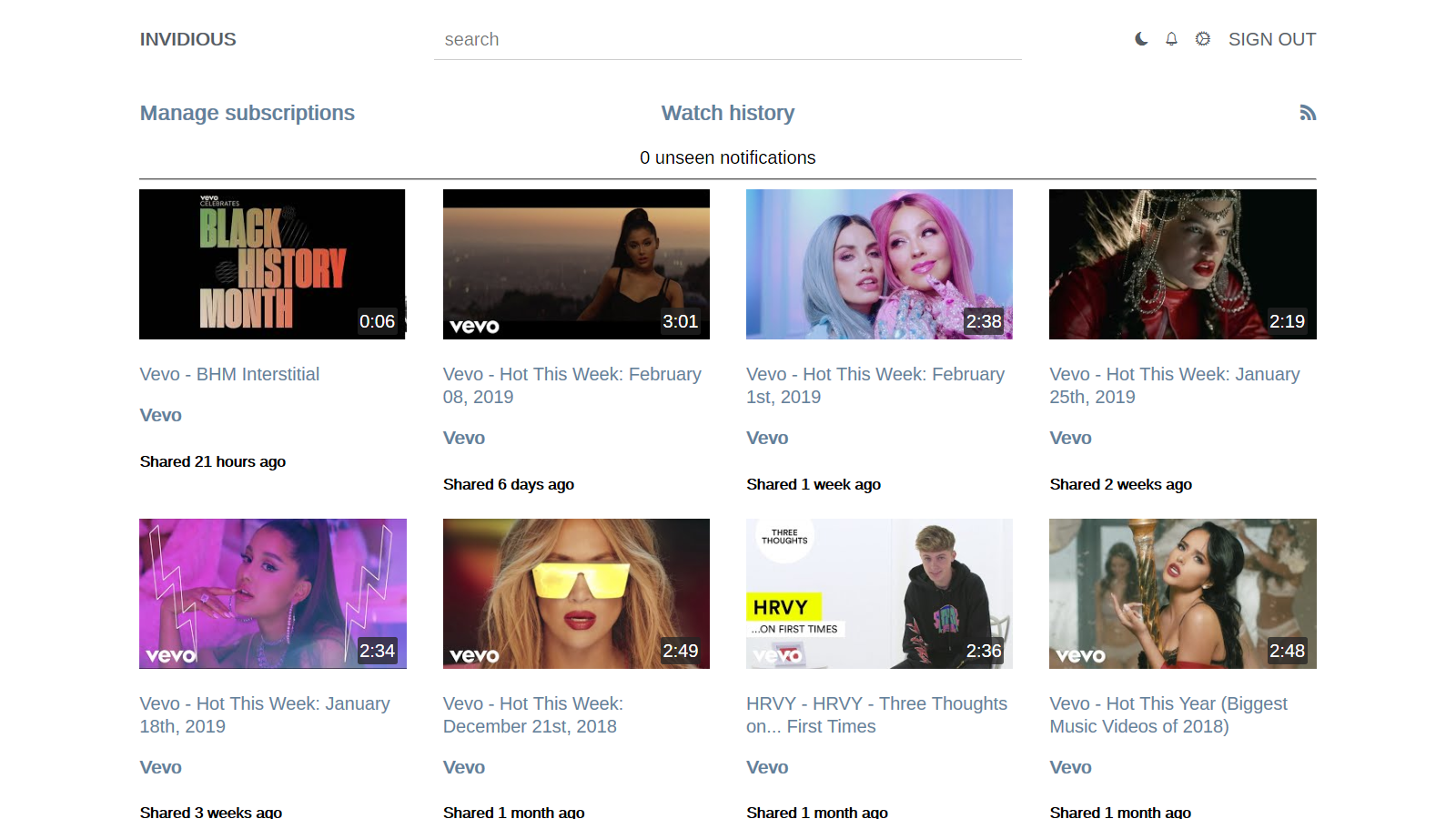

## Screenshots

|

||||

## Screenshots:

|

||||

|

||||

| Player | Preferences | Subscriptions |

|

||||

| ----------------------------------------------------------------------------------------------------------------------- | ----------------------------------------------------------------------------------------------------------------------- | --------------------------------------------------------------------------------------------------------------------------- |

|

||||

| [<img src="screenshots/01_player.png?raw=true" height="140" width="280">](screenshots/01_player.png?raw=true) | [<img src="screenshots/02_preferences.png?raw=true" height="140" width="280">](screenshots/02_preferences.png?raw=true) | [<img src="screenshots/03_subscriptions.png?raw=true" height="140" width="280">](screenshots/03_subscriptions.png?raw=true) |

|

||||

| [<img src="screenshots/04_description.png?raw=true" height="140" width="280">](screenshots/04_description.png?raw=true) | [<img src="screenshots/05_preferences.png?raw=true" height="140" width="280">](screenshots/05_preferences.png?raw=true) | [<img src="screenshots/06_subscriptions.png?raw=true" height="140" width="280">](screenshots/06_subscriptions.png?raw=true) |

|

||||

|

||||

## Installation

|

||||

## Installation:

|

||||

|

||||

See [Invidious-Updater](https://github.com/tmiland/Invidious-Updater) for a self-contained script that can automatically install and update Invidious.

|

||||

To manually compile invidious you need at least 2GB of RAM. If you have less you can setup SWAP to have a combined amount of 2 GB or use Docker instead.

|

||||

|

||||

After installation take a look at the [Post-install steps](#post-install-configuration).

|

||||

|

||||

### Automated installation:

|

||||

|

||||

[Invidious-Updater](https://github.com/tmiland/Invidious-Updater) is a self-contained script that can automatically install and update Invidious.

|

||||

|

||||

### Docker:

|

||||

|

||||

|

|

@@ -58,7 +58,7 @@ See [Invidious-Updater](https://github.com/tmiland/Invidious-Updater) for a self
$ docker-compose up
```

And visit `localhost:3000` in your browser.
Then visit `localhost:3000` in your browser.

#### Rebuild cluster:

@@ -73,9 +73,11 @@ $ docker volume rm invidious_postgresdata
$ docker-compose build
```

### Manual installation:

### Linux:

#### Install dependencies
#### Install the dependencies

```bash
# Arch Linux

@@ -88,23 +90,22 @@ $ curl -sSL https://dist.crystal-lang.org/apt/setup.sh | sudo bash
$ curl -sL "https://keybase.io/crystal/pgp_keys.asc" | sudo apt-key add -
$ echo "deb https://dist.crystal-lang.org/apt crystal main" | sudo tee /etc/apt/sources.list.d/crystal.list
$ sudo apt-get update
$ sudo apt install crystal libssl-dev libxml2-dev libyaml-dev libgmp-dev libreadline-dev postgresql librsvg2-bin libsqlite3-dev
$ sudo apt install crystal libssl-dev libxml2-dev libyaml-dev libgmp-dev libreadline-dev postgresql librsvg2-bin libsqlite3-dev zlib1g-dev
```

#### Add invidious user and clone repository
#### Add an Invidious user and clone the repository

```bash
$ useradd -m invidious
$ sudo -i -u invidious
$ git clone https://github.com/omarroth/invidious
$ git clone https://github.com/iv-org/invidious
$ exit
```

#### Setup PostgreSQL
#### Set up PostgreSQL

```bash
$ sudo systemctl enable postgresql
$ sudo systemctl start postgresql
$ sudo systemctl enable --now postgresql
$ sudo -i -u postgres
$ psql -c "CREATE USER kemal WITH PASSWORD 'kemal';" # Change 'kemal' here to a stronger password, and update `password` in config/config.yml
$ createdb -O kemal invidious

@@ -115,10 +116,12 @@ $ psql invidious kemal < /home/invidious/invidious/config/sql/users.sql
$ psql invidious kemal < /home/invidious/invidious/config/sql/session_ids.sql
$ psql invidious kemal < /home/invidious/invidious/config/sql/nonces.sql
$ psql invidious kemal < /home/invidious/invidious/config/sql/annotations.sql
$ psql invidious kemal < /home/invidious/invidious/config/sql/playlists.sql
$ psql invidious kemal < /home/invidious/invidious/config/sql/playlist_videos.sql
$ exit
```

#### Setup Invidious
#### Set up Invidious

```bash
$ sudo -i -u invidious

@@ -130,23 +133,36 @@ $ ./invidious # stop with ctrl c
$ exit
```

#### systemd service
#### Systemd service:

```bash
$ sudo cp /home/invidious/invidious/invidious.service /etc/systemd/system/invidious.service
$ sudo systemctl enable invidious.service
$ sudo systemctl start invidious.service
$ sudo systemctl enable --now invidious.service
```

### OSX:
#### Logrotate:

```bash
$ echo "/home/invidious/invidious/invidious.log {
    rotate 4
    weekly
    notifempty
    missingok
    compress
    minsize 1048576
}" | sudo tee /etc/logrotate.d/invidious.logrotate
$ sudo chmod 0644 /etc/logrotate.d/invidious.logrotate
```

### macOS:

```bash
# Install dependencies
$ brew update
$ brew install shards crystal postgres imagemagick librsvg

# Clone repository and setup postgres database
$ git clone https://github.com/omarroth/invidious
# Clone the repository and set up a PostgreSQL database
$ git clone https://github.com/iv-org/invidious
$ cd invidious
$ brew services start postgresql
$ psql -c "CREATE ROLE kemal WITH PASSWORD 'kemal';" # Change 'kemal' here to a stronger password, and update `password` in config/config.yml

@@ -158,15 +174,30 @@ $ psql invidious kemal < config/sql/users.sql
$ psql invidious kemal < config/sql/session_ids.sql
$ psql invidious kemal < config/sql/nonces.sql
$ psql invidious kemal < config/sql/annotations.sql
$ psql invidious kemal < config/sql/privacy.sql
$ psql invidious kemal < config/sql/playlists.sql
$ psql invidious kemal < config/sql/playlist_videos.sql

# Setup Invidious
# Set up Invidious
$ shards update && shards install
$ crystal build src/invidious.cr --release
```

## Post-install configuration:

Detailed configuration is available in the [configuration guide](https://github.com/iv-org/invidious/wiki/Configuration).

If you use a reverse proxy, you **must** configure Invidious to properly serve requests through it:

`https_only: true`: if you are serving your instance via HTTPS, set it to true

`domain: domain.ext`: if you are serving your instance via a domain name, set it here

`external_port: 443`: if you are serving your instance via HTTPS, set it to 443

## Update Invidious

You can see how to update Invidious [here](https://github.com/omarroth/invidious/wiki/Updating).
Instructions are available in the [updating guide](https://github.com/iv-org/invidious/wiki/Updating).

## Usage:

@@ -197,39 +228,55 @@ $ ./sentry

## Documentation

[Documentation](https://github.com/omarroth/invidious/wiki) can be found in the wiki.
[Documentation](https://github.com/iv-org/invidious/wiki) can be found in the wiki.

## Extensions

[Extensions](https://github.com/omarroth/invidious/wiki/Extensions) can be found in the wiki, as well as documentation for integrating it into other projects.
[Extensions](https://github.com/iv-org/invidious/wiki/Extensions) can be found in the wiki, as well as documentation for integrating it into other projects.

## Made with Invidious

- [FreeTube](https://github.com/FreeTubeApp/FreeTube): An Open Source YouTube app for privacy.
- [CloudTube](https://cadence.moe/cloudtube/subscriptions): A JS-rich alternate YouTube player
- [PeerTubeify](https://gitlab.com/Ealhad/peertubeify): On YouTube, displays a link to the same video on PeerTube, if it exists.
- [MusicPiped](https://github.com/deep-gaurav/MusicPiped): A materialistic music player that streams music from YouTube.
- [FreeTube](https://github.com/FreeTubeApp/FreeTube): A libre software YouTube app for privacy.
- [CloudTube](https://cadence.moe/cloudtube/subscriptions): A JavaScript-rich alternate YouTube player
- [PeerTubeify](https://gitlab.com/Cha_deL/peertubeify): On YouTube, displays a link to the same video on PeerTube, if it exists.
- [MusicPiped](https://github.com/deep-gaurav/MusicPiped): A material design music player that streams music from YouTube.
- [LapisTube](https://github.com/blubbll/lapis-tube): A fancy and advanced (experimental) YouTube front-end. Combined streams & custom YT features.
- [HoloPlay](https://github.com/stephane-r/HoloPlay): A fun Android application that connects to the Invidious API, with search, playlists and favorites.

## Contributing

1. Fork it ( https://github.com/omarroth/invidious/fork )
1. Fork it ( https://github.com/iv-org/invidious/fork )
2. Create your feature branch (git checkout -b my-new-feature)
3. Commit your changes (git commit -am 'Add some feature')
4. Push to the branch (git push origin my-new-feature)
5. Create a new Pull Request
5. Create a new pull request

#### Translation

- Log in with an account you have elsewhere, or register an account and start translating at [Hosted Weblate](https://hosted.weblate.org/engage/invidious/).

## Donate:

Liberapay: https://liberapay.com/iv-org/

## Contact

Feel free to send an email to omarroth@protonmail.com or join our [Matrix Server](https://riot.im/app/#/room/#invidious:matrix.org), or #invidious on Freenode.
Feel free to join our [Matrix room](https://matrix.to/#/#invidious:matrix.org), or #invidious on Freenode. Both platforms are bridged together.

You can also view release notes on the [releases](https://github.com/omarroth/invidious/releases) page or in the CHANGELOG.md included in the repository.
## Liability

## License
We take no responsibility for the use of our tool, or external instances provided by third parties. We strongly recommend you abide by the valid official regulations in your country. Furthermore, we refuse liability for any inappropriate use of Invidious, such as illegal downloading. This tool is provided to you in the spirit of free, open software.

[](http://www.gnu.org/licenses/agpl-3.0.en.html)
You may view the LICENSE in which this software is provided to you [here](./LICENSE).

Invidious is Free Software: You can use, study, share and improve it at your
will. Specifically you can redistribute and/or modify it under the terms of the
[GNU Affero General Public License](https://www.gnu.org/licenses/agpl.html) as
published by the Free Software Foundation, either version 3 of the License, or
(at your option) any later version.
> 16. Limitation of Liability.
>
> IN NO EVENT UNLESS REQUIRED BY APPLICABLE LAW OR AGREED TO IN WRITING
WILL ANY COPYRIGHT HOLDER, OR ANY OTHER PARTY WHO MODIFIES AND/OR CONVEYS
THE PROGRAM AS PERMITTED ABOVE, BE LIABLE TO YOU FOR DAMAGES, INCLUDING ANY
GENERAL, SPECIAL, INCIDENTAL OR CONSEQUENTIAL DAMAGES ARISING OUT OF THE
USE OR INABILITY TO USE THE PROGRAM (INCLUDING BUT NOT LIMITED TO LOSS OF
DATA OR DATA BEING RENDERED INACCURATE OR LOSSES SUSTAINED BY YOU OR THIRD
PARTIES OR A FAILURE OF THE PROGRAM TO OPERATE WITH ANY OTHER PROGRAMS),
EVEN IF SUCH HOLDER OR OTHER PARTY HAS BEEN ADVISED OF THE POSSIBILITY OF
SUCH DAMAGES.

TRANSLATION (new file, 1 line changed)

@@ -0,0 +1 @@
https://hosted.weblate.org/projects/invidious/

@@ -60,6 +60,22 @@ body {
    color: rgb(255, 0, 0);
}

.feed-menu {
    display: flex;
    justify-content: center;
    flex-wrap: wrap;
}

.feed-menu-item {
    text-align: center;
}

@media screen and (max-width: 640px) {
    .feed-menu-item {
        flex: 0 0 40%;
    }
}

.h-box {
    padding-left: 1em;
    padding-right: 1em;

assets/css/embed.css (new file, 10 lines changed)

@@ -0,0 +1,10 @@
#player {
    position: fixed;
    right: 0;
    bottom: 0;
    min-width: 100%;
    min-height: 100%;
    width: auto;
    height: auto;
    z-index: -100;
}

assets/css/videojs-vtt-thumbnails-fix.css (new file, 3 lines changed)

@@ -0,0 +1,3 @@
.video-js .vjs-vtt-thumbnail-display {
    max-width: 158px;
}

@@ -1,3 +1,5 @@
var community_data = JSON.parse(document.getElementById('community_data').innerHTML);

String.prototype.supplant = function (o) {
    return this.replace(/{([^{}]*)}/g, function (a, b) {
        var r = o[b];

@@ -1,3 +1,5 @@
var video_data = JSON.parse(document.getElementById('video_data').innerHTML);

function get_playlist(plid, retries) {
    if (retries == undefined) retries = 5;


assets/js/global.js (new file, 3 lines changed)

@@ -0,0 +1,3 @@
// Disable Web Workers. Fixes Video.js CSP violation (created by `new Worker(objURL)`):
// Refused to create a worker from 'blob:http://host/id' because it violates the following Content Security Policy directive: "worker-src 'self'".
window.Worker = undefined;
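
Setting `window.Worker` to `undefined` only avoids the CSP error if the code that would spawn a worker checks for the constructor first and has another path to take. A minimal sketch of that kind of check, assuming a hypothetical `run_task` helper and a plain `fake_window` object standing in for `window` (neither is part of Invidious or Video.js):

```javascript
// Hypothetical helper, for illustration only: reports which path a library
// would take depending on whether the Worker constructor is available.
function run_task(scope, task, input) {
    if (typeof scope.Worker === 'function') {
        // A real implementation would hand `input` to a worker here.
        return { mode: 'worker', result: null };
    }
    // Fallback path taken once `scope.Worker` has been set to undefined.
    return { mode: 'inline', result: task(input) };
}

// Stand-in for `window` so the sketch also runs outside a browser.
var fake_window = { Worker: function () {} };
var double = function (n) { return n * 2; };

var before = run_task(fake_window, double, 21);

fake_window.Worker = undefined; // same assignment as in global.js
var after = run_task(fake_window, double, 21);
```

Here `before.mode` is `'worker'` and `after.mode` is `'inline'`: the same call silently switches to the main-thread path once the constructor is gone.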


assets/js/handlers.js (new file, 144 lines changed)

@@ -0,0 +1,144 @@
'use strict';

(function () {
    var n2a = function (n) { return Array.prototype.slice.call(n); };

    var video_player = document.getElementById('player_html5_api');
    if (video_player) {
        video_player.onmouseenter = function () { video_player['data-title'] = video_player['title']; video_player['title'] = ''; };
        video_player.onmouseleave = function () { video_player['title'] = video_player['data-title']; video_player['data-title'] = ''; };
        video_player.oncontextmenu = function () { video_player['title'] = video_player['data-title']; };
    }

    // For dynamically inserted elements
    document.addEventListener('click', function (e) {
        if (!e || !e.target) { return; }
        e = e.target;
        var handler_name = e.getAttribute('data-onclick');
        switch (handler_name) {
            case 'jump_to_time':
                var time = e.getAttribute('data-jump-time');
                player.currentTime(time);
                break;
            case 'get_youtube_replies':
                var load_more = e.getAttribute('data-load-more') !== null;
                get_youtube_replies(e, load_more);
                break;
            case 'toggle_parent':
                toggle_parent(e);
                break;
            default:
                break;
        }
    });

    n2a(document.querySelectorAll('[data-mouse="switch_classes"]')).forEach(function (e) {
        var classes = e.getAttribute('data-switch-classes').split(',');
        var ec = classes[0];
        var lc = classes[1];
        var onoff = function (on, off) {
            var cs = e.getAttribute('class');
            cs = cs.split(off).join(on);
            e.setAttribute('class', cs);
        };
        e.onmouseenter = function () { onoff(ec, lc); };
        e.onmouseleave = function () { onoff(lc, ec); };
    });

    n2a(document.querySelectorAll('[data-onsubmit="return_false"]')).forEach(function (e) {
        e.onsubmit = function () { return false; };
    });

    n2a(document.querySelectorAll('[data-onclick="mark_watched"]')).forEach(function (e) {
        e.onclick = function () { mark_watched(e); };
    });
    n2a(document.querySelectorAll('[data-onclick="mark_unwatched"]')).forEach(function (e) {
        e.onclick = function () { mark_unwatched(e); };
    });
    n2a(document.querySelectorAll('[data-onclick="add_playlist_video"]')).forEach(function (e) {
        e.onclick = function () { add_playlist_video(e); };
    });
    n2a(document.querySelectorAll('[data-onclick="add_playlist_item"]')).forEach(function (e) {
        e.onclick = function () { add_playlist_item(e); };
    });
    n2a(document.querySelectorAll('[data-onclick="remove_playlist_item"]')).forEach(function (e) {
        e.onclick = function () { remove_playlist_item(e); };
    });
    n2a(document.querySelectorAll('[data-onclick="revoke_token"]')).forEach(function (e) {
        e.onclick = function () { revoke_token(e); };
    });
    n2a(document.querySelectorAll('[data-onclick="remove_subscription"]')).forEach(function (e) {
        e.onclick = function () { remove_subscription(e); };
    });
    n2a(document.querySelectorAll('[data-onclick="notification_requestPermission"]')).forEach(function (e) {
        e.onclick = function () { Notification.requestPermission(); };
    });

    n2a(document.querySelectorAll('[data-onrange="update_volume_value"]')).forEach(function (e) {
        var cb = function () { update_volume_value(e); }
        e.oninput = cb;
        e.onchange = cb;
    });

    function update_volume_value(element) {
        document.getElementById('volume-value').innerText = element.value;
    }

    function revoke_token(target) {
        var row = target.parentNode.parentNode.parentNode.parentNode.parentNode;
        row.style.display = 'none';
        var count = document.getElementById('count');
        count.innerText = count.innerText - 1;

        var referer = window.encodeURIComponent(document.location.href);
        var url = '/token_ajax?action_revoke_token=1&redirect=false' +
            '&referer=' + referer +
            '&session=' + target.getAttribute('data-session');
        var xhr = new XMLHttpRequest();
        xhr.responseType = 'json';
        xhr.timeout = 10000;
        xhr.open('POST', url, true);
        xhr.setRequestHeader('Content-Type', 'application/x-www-form-urlencoded');

        xhr.onreadystatechange = function () {
            if (xhr.readyState == 4) {
                if (xhr.status != 200) {
                    count.innerText = parseInt(count.innerText) + 1;
                    row.style.display = '';
                }
            }
        }

        var csrf_token = target.parentNode.querySelector('input[name="csrf_token"]').value;
        xhr.send('csrf_token=' + csrf_token);
    }

    function remove_subscription(target) {
        var row = target.parentNode.parentNode.parentNode.parentNode.parentNode;
        row.style.display = 'none';
        var count = document.getElementById('count');
        count.innerText = count.innerText - 1;

        var referer = window.encodeURIComponent(document.location.href);
        var url = '/subscription_ajax?action_remove_subscriptions=1&redirect=false' +
            '&referer=' + referer +
            '&c=' + target.getAttribute('data-ucid');
        var xhr = new XMLHttpRequest();
        xhr.responseType = 'json';
        xhr.timeout = 10000;
        xhr.open('POST', url, true);
        xhr.setRequestHeader('Content-Type', 'application/x-www-form-urlencoded');

        xhr.onreadystatechange = function () {
            if (xhr.readyState == 4) {
                if (xhr.status != 200) {
                    count.innerText = parseInt(count.innerText) + 1;
                    row.style.display = '';
                }
            }
        }

        var csrf_token = target.parentNode.querySelector('input[name="csrf_token"]').value;
        xhr.send('csrf_token=' + csrf_token);
    }
})();
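
The click listener in handlers.js looks the handler up from the clicked element's `data-onclick` attribute instead of attaching one listener per element, which is why it also covers elements inserted later. The same idea can be written against a lookup table rather than a `switch`. This is only a sketch under assumptions: `dispatch_click`, `fake_element` and the `handlers` table are hypothetical names, not part of this commit.

```javascript
// Hypothetical registry-based variant of the delegated click dispatcher.
// `handlers` maps a data-onclick value to a function; unknown or missing
// names are ignored, mirroring the `default: break;` branch.
function dispatch_click(target, handlers) {
    if (!target || typeof target.getAttribute !== 'function') { return false; }
    var handler_name = target.getAttribute('data-onclick');
    if (!Object.prototype.hasOwnProperty.call(handlers, handler_name)) { return false; }
    handlers[handler_name](target);
    return true;
}

// Minimal stand-in for a DOM element so the sketch runs without a browser.
function fake_element(attributes) {
    return {
        getAttribute: function (name) {
            return Object.prototype.hasOwnProperty.call(attributes, name) ? attributes[name] : null;
        }
    };
}

var seen = [];
var handlers = {
    jump_to_time: function (e) { seen.push('jump:' + e.getAttribute('data-jump-time')); },
    toggle_parent: function (e) { seen.push('toggle'); }
};

var handled = dispatch_click(fake_element({ 'data-onclick': 'jump_to_time', 'data-jump-time': '42' }), handlers);
var unknown = dispatch_click(fake_element({ 'data-onclick': 'unknown' }), handlers);
var missing = dispatch_click(fake_element({}), handlers);
```

In a page, the dispatcher would be wired up once with `document.addEventListener('click', function (e) { dispatch_click(e.target, handlers); });`.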


@@ -1,3 +1,5 @@
var notification_data = JSON.parse(document.getElementById('notification_data').innerHTML);

var notifications, delivered;

function get_subscriptions(callback, retries) {

@@ -1,3 +1,6 @@
var player_data = JSON.parse(document.getElementById('player_data').innerHTML);
var video_data = JSON.parse(document.getElementById('video_data').innerHTML);

var options = {
    preload: 'auto',
    liveui: true,

@@ -35,7 +38,7 @@ var shareOptions = {
    title: player_data.title,
    description: player_data.description,
    image: player_data.thumbnail,
    embedCode: "<iframe id='ivplayer' type='text/html' width='640' height='360' src='" + embed_url + "' frameborder='0'></iframe>"
    embedCode: "<iframe id='ivplayer' width='640' height='360' src='" + embed_url + "' style='border:none;'></iframe>"
}

var player = videojs('player', options);

@@ -146,7 +149,8 @@ if (!video_data.params.listen && video_data.params.quality === 'dash') {
}

player.vttThumbnails({
    src: location.origin + '/api/v1/storyboards/' + video_data.id + '?height=90'
    src: location.origin + '/api/v1/storyboards/' + video_data.id + '?height=90',
    showTimestamp: true
});

// Enable annotations

@@ -228,11 +232,24 @@ function set_time_percent(percent) {
    player.currentTime(newTime);
}

function play() {
    player.play();
}

function pause() {
    player.pause();
}

function stop() {
    player.pause();
    player.currentTime(0);
}

function toggle_play() {
    if (player.paused()) {
        player.play();
        play();
    } else {
        player.pause();
        pause();
    }
}

@@ -338,9 +355,22 @@ window.addEventListener('keydown', e => {
        switch (decoratedKey) {
            case ' ':
            case 'k':
            case 'MediaPlayPause':
                action = toggle_play;
                break;

            case 'MediaPlay':
                action = play;
                break;

            case 'MediaPause':
                action = pause;
                break;

            case 'MediaStop':
                action = stop;
                break;

            case 'ArrowUp':
                if (isPlayerFocused) {
                    action = increase_volume.bind(this, 0.1);

@@ -357,9 +387,11 @@ window.addEventListener('keydown', e => {
                break;

            case 'ArrowRight':
            case 'MediaFastForward':
                action = skip_seconds.bind(this, 5);
                break;
            case 'ArrowLeft':
            case 'MediaRewind':
                action = skip_seconds.bind(this, -5);
                break;
            case 'l':

@@ -391,9 +423,11 @@ window.addEventListener('keydown', e => {
                break;

            case 'N':
            case 'MediaTrackNext':
                action = next_video;
                break;
            case 'P':
            case 'MediaTrackPrevious':
                // TODO: Add support to play back previous video.
                break;


@@ -1,3 +1,29 @@
var playlist_data = JSON.parse(document.getElementById('playlist_data').innerHTML);

function add_playlist_video(target) {
    var select = target.parentNode.children[0].children[1];
    var option = select.children[select.selectedIndex];

    var url = '/playlist_ajax?action_add_video=1&redirect=false' +
        '&video_id=' + target.getAttribute('data-id') +
        '&playlist_id=' + option.getAttribute('data-plid');
    var xhr = new XMLHttpRequest();
    xhr.responseType = 'json';
    xhr.timeout = 10000;
    xhr.open('POST', url, true);
    xhr.setRequestHeader('Content-Type', 'application/x-www-form-urlencoded');

    xhr.onreadystatechange = function () {
        if (xhr.readyState == 4) {
            if (xhr.status == 200) {
                option.innerText = '✓' + option.innerText;
            }
        }
    }

    xhr.send('csrf_token=' + playlist_data.csrf_token);
}

function add_playlist_item(target) {
    var tile = target.parentNode.parentNode.parentNode.parentNode.parentNode;
    tile.style.display = 'none';

File diff suppressed because one or more lines are too long

@@ -1,3 +1,5 @@
var subscribe_data = JSON.parse(document.getElementById('subscribe_data').innerHTML);

var subscribe_button = document.getElementById('subscribe');
subscribe_button.parentNode['action'] = 'javascript:void(0)';


@ -28,6 +28,27 @@ window.addEventListener('load', function () {

|

|||

update_mode(window.localStorage.dark_mode);

|

||||

});

|

||||

|

||||

|

||||

var darkScheme = window.matchMedia('(prefers-color-scheme: dark)');

|

||||

var lightScheme = window.matchMedia('(prefers-color-scheme: light)');

|

||||

|

||||

darkScheme.addListener(scheme_switch);

|

||||

lightScheme.addListener(scheme_switch);

|

||||

|

||||

function scheme_switch (e) {

|

||||

// ignore this method if we have a preference set

|

||||

if (localStorage.getItem('dark_mode')) {

|

||||

return;

|

||||

}

|

||||

if (e.matches) {

|

||||

if (e.media.includes("dark")) {

|

||||

set_mode(true);

|

||||

} else if (e.media.includes("light")) {

|

||||

set_mode(false);

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

function set_mode (bool) {

|

||||

document.getElementById('dark_theme').media = !bool ? 'none' : '';

|

||||

document.getElementById('light_theme').media = bool ? 'none' : '';

|

||||

|

|

|

|||

4

assets/js/videojs-vtt-thumbnails.min.js

vendored


File diff suppressed because one or more lines are too long

|

|

@ -1,3 +1,5 @@

|

|||

var video_data = JSON.parse(document.getElementById('video_data').innerHTML);

|

||||

|

||||

String.prototype.supplant = function (o) {

|

||||

return this.replace(/{([^{}]*)}/g, function (a, b) {

|

||||

var r = o[b];

|

||||

|

|

|

|||

|

|

@ -1,3 +1,5 @@

|

|||

var watched_data = JSON.parse(document.getElementById('watched_data').innerHTML);

|

||||

|

||||

function mark_watched(target) {

|

||||

var tile = target.parentNode.parentNode.parentNode.parentNode.parentNode;

|

||||

tile.style.display = 'none';

|

||||

|

|

|

|||

19

config/migrate-scripts/migrate-db-1eca969.sh

Executable file


|

|

@ -0,0 +1,19 @@

|

|||

#!/bin/sh

|

||||

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN title CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN views CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN likes CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN dislikes CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN wilson_score CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN published CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN description CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN language CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN author CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN ucid CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN allowed_regions CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN is_family_friendly CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN genre CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN genre_url CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN license CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN sub_count_text CASCADE"

|

||||

psql invidious kemal -c "ALTER TABLE videos DROP COLUMN author_thumbnail CASCADE"

|

||||

|

|

@ -1,3 +1,14 @@

|

|||

-- Type: public.privacy

|

||||

|

||||

-- DROP TYPE public.privacy;

|

||||

|

||||

CREATE TYPE public.privacy AS ENUM

|

||||

(

|

||||

'Public',

|

||||

'Unlisted',

|

||||

'Private'

|

||||

);

|

||||

|

||||

-- Table: public.playlists

|

||||

|

||||

-- DROP TABLE public.playlists;

|

||||

|

|

|

|||

|

|

@ -1,10 +0,0 @@

|

|||

-- Type: public.privacy

|

||||

|

||||

-- DROP TYPE public.privacy;

|

||||

|

||||

CREATE TYPE public.privacy AS ENUM

|

||||

(

|

||||

'Public',

|

||||

'Unlisted',

|

||||

'Private'

|

||||

);

|

||||

|

|

@ -7,23 +7,6 @@ CREATE TABLE public.videos

|

|||

id text NOT NULL,

|

||||

info text,

|

||||

updated timestamp with time zone,

|

||||

title text,

|

||||

views bigint,

|

||||

likes integer,

|

||||

dislikes integer,

|

||||

wilson_score double precision,

|

||||

published timestamp with time zone,

|

||||

description text,

|

||||

language text,

|

||||

author text,

|

||||

ucid text,

|

||||

allowed_regions text[],

|

||||

is_family_friendly boolean,

|

||||

genre text,

|

||||

genre_url text,

|

||||

license text,

|

||||

sub_count_text text,

|

||||

author_thumbnail text,

|

||||

CONSTRAINT videos_pkey PRIMARY KEY (id)

|

||||

);

|

||||

|

||||

|

|

|

|||

|

|

@ -1,14 +1,18 @@

|

|||

version: '3'

|

||||

services:

|

||||

postgres:

|

||||

build:

|

||||

context: .

|

||||

dockerfile: docker/Dockerfile.postgres

|

||||

image: postgres:10

|

||||

restart: unless-stopped

|

||||

volumes:

|

||||

- postgresdata:/var/lib/postgresql/data

|

||||

- ./config/sql:/config/sql

|

||||

- ./docker/init-invidious-db.sh:/docker-entrypoint-initdb.d/init-invidious-db.sh

|

||||

environment:

|

||||

POSTGRES_DB: invidious

|

||||

POSTGRES_PASSWORD: kemal

|

||||

POSTGRES_USER: kemal

|

||||

healthcheck:

|

||||

test: ["CMD", "pg_isready", "-U", "postgres"]

|

||||

test: ["CMD", "pg_isready", "-U", "postgres"]

|

||||

invidious:

|

||||

build:

|

||||

context: .

|

||||

|

|

@ -16,6 +20,21 @@ services:

|

|||

restart: unless-stopped

|

||||

ports:

|

||||

- "127.0.0.1:3000:3000"

|

||||

environment:

|

||||

# Adapted from ./config/config.yml

|

||||

INVIDIOUS_CONFIG: |

|

||||

channel_threads: 1

|

||||

check_tables: true

|

||||

feed_threads: 1

|

||||

db:

|

||||

user: kemal

|

||||

password: kemal

|

||||

host: postgres

|

||||

port: 5432

|

||||

dbname: invidious

|

||||

full_refresh: false

|

||||

https_only: false

|

||||

domain:

|

||||

depends_on:

|

||||

- postgres

|

||||

|

||||

|

|

|

|||

|

|

@ -1,27 +1,20 @@

|

|||

FROM alpine:edge AS builder

|

||||

RUN apk add --no-cache crystal shards libc-dev \

|

||||

yaml-dev libxml2-dev sqlite-dev zlib-dev openssl-dev \

|

||||

sqlite-static zlib-static openssl-libs-static

|

||||

FROM crystallang/crystal:0.35.1-alpine AS builder

|

||||

RUN apk add --no-cache curl sqlite-static

|

||||

WORKDIR /invidious

|

||||

COPY ./shard.yml ./shard.yml

|

||||

RUN shards update && shards install

|

||||

RUN apk add --no-cache curl && \

|

||||

curl -Lo /etc/apk/keys/omarroth.rsa.pub https://github.com/omarroth/boringssl-alpine/releases/download/1.1.0-r0/omarroth.rsa.pub && \

|

||||

curl -Lo boringssl-dev.apk https://github.com/omarroth/boringssl-alpine/releases/download/1.1.0-r0/boringssl-dev-1.1.0-r0.apk && \

|

||||

curl -Lo lsquic.apk https://github.com/omarroth/lsquic-alpine/releases/download/2.6.3-r0/lsquic-2.6.3-r0.apk && \

|

||||

tar -xf boringssl-dev.apk && \

|

||||

tar -xf lsquic.apk

|

||||

RUN mv ./usr/lib/libcrypto.a ./lib/lsquic/src/lsquic/ext/libcrypto.a && \

|

||||

mv ./usr/lib/libssl.a ./lib/lsquic/src/lsquic/ext/libssl.a && \

|

||||

mv ./usr/lib/liblsquic.a ./lib/lsquic/src/lsquic/ext/liblsquic.a

|

||||

RUN shards update && shards install && \

|

||||

# TODO: Document build instructions

|

||||

# See https://github.com/omarroth/boringssl-alpine/blob/master/APKBUILD,

|

||||

# https://github.com/omarroth/lsquic-alpine/blob/master/APKBUILD,

|

||||

# https://github.com/omarroth/lsquic.cr/issues/1#issuecomment-631610081

|

||||

# for details building static lib

|

||||

curl -Lo ./lib/lsquic/src/lsquic/ext/liblsquic.a https://omar.yt/lsquic/liblsquic-v2.18.1.a

|

||||

COPY ./src/ ./src/

|

||||

# TODO: .git folder is required for building – this is destructive.

|

||||

# See definition of CURRENT_BRANCH, CURRENT_COMMIT and CURRENT_VERSION.

|

||||

COPY ./.git/ ./.git/

|

||||

RUN crystal build ./src/invidious.cr \

|

||||

--static --warnings all --error-on-warnings \

|

||||

# TODO: Remove next line, see https://github.com/crystal-lang/crystal/issues/7946

|

||||

-Dmusl \

|

||||

--static --warnings all \

|

||||

--link-flags "-lxml2 -llzma"

|

||||

|

||||

FROM alpine:latest

|

||||

|

|

@ -30,10 +23,11 @@ WORKDIR /invidious

|

|||

RUN addgroup -g 1000 -S invidious && \

|

||||

adduser -u 1000 -S invidious -G invidious

|

||||

COPY ./assets/ ./assets/

|

||||

COPY ./config/config.yml ./config/config.yml

|

||||

COPY --chown=invidious ./config/config.yml ./config/config.yml

|

||||

RUN sed -i 's/host: \(127.0.0.1\|localhost\)/host: postgres/' config/config.yml

|

||||

COPY ./config/sql/ ./config/sql/

|

||||

COPY ./locales/ ./locales/

|

||||

RUN sed -i 's/host: \(127.0.0.1\|localhost\)/host: postgres/' config/config.yml

|

||||

COPY --from=builder /invidious/invidious .

|

||||

|

||||

USER invidious

|

||||

CMD [ "/invidious/invidious" ]

|

||||

|

|

|

|||

|

|

@ -1,9 +0,0 @@

|

|||

FROM postgres:10

|

||||

|

||||

ENV POSTGRES_USER postgres

|

||||

|

||||

ADD ./config/sql /config/sql

|

||||

ADD ./docker/entrypoint.postgres.sh /entrypoint.sh

|

||||

|

||||

ENTRYPOINT [ "/entrypoint.sh" ]

|

||||

CMD [ "postgres" ]

|

||||

|

|

@ -1,31 +0,0 @@

|

|||

#!/usr/bin/env bash

|

||||

|

||||

CMD="$@"

|

||||

if [ ! -f /var/lib/postgresql/data/setupFinished ]; then

|

||||

echo "### first run - setting up invidious database"

|

||||

/usr/local/bin/docker-entrypoint.sh postgres &

|

||||

sleep 10

|

||||

until runuser -l postgres -c 'pg_isready' 2>/dev/null; do

|

||||

>&2 echo "### Postgres is unavailable - waiting"

|

||||

sleep 5

|

||||

done

|

||||

>&2 echo "### importing table schemas"

|

||||

su postgres -c 'createdb invidious'

|

||||

su postgres -c 'psql -c "CREATE USER kemal WITH PASSWORD '"'kemal'"'"'

|

||||

su postgres -c 'psql invidious kemal < config/sql/channels.sql'

|

||||

su postgres -c 'psql invidious kemal < config/sql/videos.sql'

|

||||

su postgres -c 'psql invidious kemal < config/sql/channel_videos.sql'

|

||||

su postgres -c 'psql invidious kemal < config/sql/users.sql'

|

||||

su postgres -c 'psql invidious kemal < config/sql/session_ids.sql'

|

||||

su postgres -c 'psql invidious kemal < config/sql/nonces.sql'

|

||||

su postgres -c 'psql invidious kemal < config/sql/annotations.sql'

|

||||

su postgres -c 'psql invidious kemal < config/sql/playlists.sql'

|

||||

su postgres -c 'psql invidious kemal < config/sql/playlist_videos.sql'

|

||||

su postgres -c 'psql invidious kemal < config/sql/privacy.sql'

|

||||

touch /var/lib/postgresql/data/setupFinished

|

||||

echo "### invidious database setup finished"

|

||||

exit

|

||||

fi

|

||||

|

||||

echo "running postgres /usr/local/bin/docker-entrypoint.sh $CMD"

|

||||

exec /usr/local/bin/docker-entrypoint.sh $CMD

|

||||

16

docker/init-invidious-db.sh

Executable file


|

|

@ -0,0 +1,16 @@

|

|||

#!/bin/bash

|

||||

set -eou pipefail

|

||||

|

||||

psql -v ON_ERROR_STOP=1 --username "$POSTGRES_USER" --dbname "$POSTGRES_DB" <<-EOSQL

|

||||

CREATE USER postgres;

|

||||

EOSQL

|

||||

|

||||

psql -v ON_ERROR_STOP=1 --username "$POSTGRES_USER" --dbname "$POSTGRES_DB" < config/sql/channels.sql

|

||||

psql -v ON_ERROR_STOP=1 --username "$POSTGRES_USER" --dbname "$POSTGRES_DB" < config/sql/videos.sql

|

||||

psql -v ON_ERROR_STOP=1 --username "$POSTGRES_USER" --dbname "$POSTGRES_DB" < config/sql/channel_videos.sql

|

||||

psql -v ON_ERROR_STOP=1 --username "$POSTGRES_USER" --dbname "$POSTGRES_DB" < config/sql/users.sql

|

||||

psql -v ON_ERROR_STOP=1 --username "$POSTGRES_USER" --dbname "$POSTGRES_DB" < config/sql/session_ids.sql

|

||||

psql -v ON_ERROR_STOP=1 --username "$POSTGRES_USER" --dbname "$POSTGRES_DB" < config/sql/nonces.sql

|

||||

psql -v ON_ERROR_STOP=1 --username "$POSTGRES_USER" --dbname "$POSTGRES_DB" < config/sql/annotations.sql

|

||||

psql -v ON_ERROR_STOP=1 --username "$POSTGRES_USER" --dbname "$POSTGRES_DB" < config/sql/playlists.sql

|

||||

psql -v ON_ERROR_STOP=1 --username "$POSTGRES_USER" --dbname "$POSTGRES_DB" < config/sql/playlist_videos.sql

|

||||

1

kubernetes/.gitignore

vendored

Normal file


|

|

@ -0,0 +1 @@

|

|||

/charts/*.tgz

|

||||

6

kubernetes/Chart.lock

Normal file


|

|

@ -0,0 +1,6 @@

|

|||

dependencies:

|

||||

- name: postgresql

|

||||

repository: https://kubernetes-charts.storage.googleapis.com/

|

||||

version: 8.3.0

|

||||

digest: sha256:1feec3c396cbf27573dc201831ccd3376a4a6b58b2e7618ce30a89b8f5d707fd

|

||||

generated: "2020-02-07T13:39:38.624846+01:00"

|

||||

22

kubernetes/Chart.yaml

Normal file


|

|

@ -0,0 +1,22 @@

|

|||

apiVersion: v2

|

||||

name: invidious

|

||||

description: Invidious is an alternative front-end to YouTube

|

||||

version: 1.1.0

|

||||

appVersion: 0.20.1

|

||||

keywords:

|

||||

- youtube

|

||||

- proxy

|

||||

- video

|

||||

- privacy

|

||||

home: https://invidio.us/

|

||||

icon: https://raw.githubusercontent.com/omarroth/invidious/05988c1c49851b7d0094fca16aeaf6382a7f64ab/assets/favicon-32x32.png

|

||||

sources:

|

||||

- https://github.com/omarroth/invidious

|

||||

maintainers:

|

||||

- name: Leon Klingele

|

||||

email: mail@leonklingele.de

|

||||

dependencies:

|

||||

- name: postgresql

|

||||

version: ~8.3.0

|

||||

repository: "https://kubernetes-charts.storage.googleapis.com/"

|

||||

engine: gotpl

|

||||

41

kubernetes/README.md

Normal file


|

|

@ -0,0 +1,41 @@

|

|||

# Invidious Helm chart

|

||||

|

||||

Easily deploy Invidious to Kubernetes.

|

||||

|

||||

## Installing Helm chart

|

||||

|

||||

```sh

|

||||

# Build Helm dependencies

|

||||

$ helm dep build

|

||||

|

||||

# Add PostgreSQL init scripts

|

||||

$ kubectl create configmap invidious-postgresql-init \

|

||||

--from-file=../config/sql/channels.sql \

|

||||

--from-file=../config/sql/videos.sql \

|

||||

--from-file=../config/sql/channel_videos.sql \

|

||||

--from-file=../config/sql/users.sql \

|

||||

--from-file=../config/sql/session_ids.sql \

|

||||

--from-file=../config/sql/nonces.sql \

|

||||

--from-file=../config/sql/annotations.sql \

|

||||

--from-file=../config/sql/playlists.sql \

|

||||

--from-file=../config/sql/playlist_videos.sql

|

||||

|

||||

# Install Helm app to your Kubernetes cluster

|

||||

$ helm install invidious ./

|

||||

```

|

||||

|

||||

## Upgrading

|

||||

|

||||

```sh

|

||||

# Upgrading is easy, too!

|

||||

$ helm upgrade invidious ./

|

||||

```

|

||||

|

||||

## Uninstall

|

||||

|

||||

```sh

|

||||

# Get rid of everything (except database)

|

||||

$ helm delete invidious

|

||||

|

||||

# To also delete the database, remove all invidious-postgresql PVCs

|

||||

```

|

||||

16

kubernetes/templates/_helpers.tpl

Normal file


|

|

@ -0,0 +1,16 @@

|

|||

{{/* vim: set filetype=mustache: */}}

|

||||

{{/*

|

||||

Expand the name of the chart.

|

||||

*/}}

|

||||

{{- define "invidious.name" -}}

|

||||

{{- default .Chart.Name .Values.nameOverride | trunc 63 | trimSuffix "-" -}}

|

||||

{{- end -}}

|

||||

|

||||

{{/*

|

||||

Create a default fully qualified app name.

|

||||

We truncate at 63 chars because some Kubernetes name fields are limited to this (by the DNS naming spec).

|

||||

*/}}

|

||||

{{- define "invidious.fullname" -}}

|

||||

{{- $name := default .Chart.Name .Values.nameOverride -}}

|

||||

{{- printf "%s-%s" .Release.Name $name | trunc 63 | trimSuffix "-" -}}

|

||||

{{- end -}}

|

||||

11

kubernetes/templates/configmap.yaml

Normal file


|

|

@ -0,0 +1,11 @@

|

|||

apiVersion: v1

|

||||

kind: ConfigMap

|

||||

metadata:

|

||||

name: {{ template "invidious.fullname" . }}

|

||||

labels:

|

||||

app: {{ template "invidious.name" . }}

|

||||

chart: "{{ .Chart.Name }}-{{ .Chart.Version }}"

|

||||

release: {{ .Release.Name }}

|

||||

data:

|

||||

INVIDIOUS_CONFIG: |

|

||||

{{ toYaml .Values.config | indent 4 }}

|

||||

61

kubernetes/templates/deployment.yaml

Normal file


|

|

@ -0,0 +1,61 @@

|

|||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

metadata:

|

||||

name: {{ template "invidious.fullname" . }}

|

||||

labels:

|

||||

app: {{ template "invidious.name" . }}

|

||||

chart: "{{ .Chart.Name }}-{{ .Chart.Version }}"

|

||||

release: {{ .Release.Name }}

|

||||

spec:

|

||||

replicas: {{ .Values.replicaCount }}

|

||||

selector:

|

||||

matchLabels:

|

||||

app: {{ template "invidious.name" . }}

|

||||

release: {{ .Release.Name }}

|

||||

template:

|

||||

metadata:

|

||||

labels:

|

||||

app: {{ template "invidious.name" . }}

|

||||

chart: "{{ .Chart.Name }}-{{ .Chart.Version }}"

|

||||

release: {{ .Release.Name }}

|

||||

spec:

|

||||

securityContext:

|

||||

runAsUser: {{ .Values.securityContext.runAsUser }}

|

||||

runAsGroup: {{ .Values.securityContext.runAsGroup }}

|

||||

fsGroup: {{ .Values.securityContext.fsGroup }}

|

||||

initContainers:

|

||||

- name: wait-for-postgresql

|

||||

image: postgres

|

||||

args:

|

||||

- /bin/sh

|

||||

- -c

|

||||

- until pg_isready -h {{ .Values.config.db.host }} -p {{ .Values.config.db.port }} -U {{ .Values.config.db.user }}; do echo waiting for database; sleep 2; done;

|

||||

containers:

|

||||

- name: {{ .Chart.Name }}

|

||||

image: "{{ .Values.image.repository }}:{{ .Values.image.tag }}"

|

||||

imagePullPolicy: {{ .Values.image.pullPolicy }}

|

||||

ports:

|

||||

- containerPort: 3000

|

||||

env:

|

||||

- name: INVIDIOUS_CONFIG

|

||||

valueFrom:

|

||||

configMapKeyRef:

|

||||

key: INVIDIOUS_CONFIG

|

||||

name: {{ template "invidious.fullname" . }}

|

||||

securityContext:

|

||||

allowPrivilegeEscalation: {{ .Values.securityContext.allowPrivilegeEscalation }}

|

||||

capabilities:

|

||||

drop:

|

||||

- ALL

|

||||

resources:

|

||||

{{ toYaml .Values.resources | indent 10 }}

|

||||

readinessProbe:

|

||||

httpGet:

|

||||

port: 3000

|

||||

path: /

|

||||

livenessProbe:

|

||||

httpGet:

|

||||

port: 3000

|

||||

path: /

|

||||

initialDelaySeconds: 15

|

||||

restartPolicy: Always

|

||||

18

kubernetes/templates/hpa.yaml

Normal file


|

|

@ -0,0 +1,18 @@

|

|||

{{- if .Values.autoscaling.enabled }}

|

||||

apiVersion: autoscaling/v1

|

||||

kind: HorizontalPodAutoscaler

|

||||

metadata:

|

||||

name: {{ template "invidious.fullname" . }}

|

||||

labels:

|

||||

app: {{ template "invidious.name" . }}

|

||||

chart: "{{ .Chart.Name }}-{{ .Chart.Version }}"

|

||||

release: {{ .Release.Name }}

|

||||

spec:

|

||||

scaleTargetRef:

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

name: {{ template "invidious.fullname" . }}

|

||||

minReplicas: {{ .Values.autoscaling.minReplicas }}

|

||||

maxReplicas: {{ .Values.autoscaling.maxReplicas }}

|

||||

targetCPUUtilizationPercentage: {{ .Values.autoscaling.targetCPUUtilizationPercentage }}

|

||||

{{- end }}

|

||||

20

kubernetes/templates/service.yaml

Normal file


|

|

@ -0,0 +1,20 @@

|

|||

apiVersion: v1

|

||||

kind: Service

|

||||

metadata:

|

||||

name: {{ template "invidious.fullname" . }}

|

||||

labels:

|

||||

app: {{ template "invidious.name" . }}

|

||||

chart: {{ .Chart.Name }}

|

||||

release: {{ .Release.Name }}

|

||||

spec:

|

||||

type: {{ .Values.service.type }}

|

||||

ports:

|

||||

- name: http

|

||||

port: {{ .Values.service.port }}

|

||||

targetPort: 3000

|

||||

selector:

|

||||

app: {{ template "invidious.name" . }}

|

||||

release: {{ .Release.Name }}

|

||||

{{- if .Values.service.loadBalancerIP }}

|

||||

loadBalancerIP: {{ .Values.service.loadBalancerIP }}

|

||||

{{- end }}

|

||||

56

kubernetes/values.yaml

Normal file


|

|

@ -0,0 +1,56 @@

|

|||

name: invidious

|

||||

|

||||

image:

|

||||

repository: omarroth/invidious

|

||||

tag: latest

|

||||

pullPolicy: Always

|

||||

|

||||

replicaCount: 1

|

||||

|

||||

autoscaling:

|

||||

enabled: false

|

||||

minReplicas: 1

|

||||

maxReplicas: 16

|

||||

targetCPUUtilizationPercentage: 50

|

||||

|

||||

service:

|

||||

type: clusterIP

|

||||

port: 3000

|

||||

#loadBalancerIP:

|

||||

|

||||

resources: {}

|

||||

#requests:

|

||||

# cpu: 100m

|

||||

# memory: 64Mi

|

||||

#limits:

|

||||

# cpu: 800m

|

||||

# memory: 512Mi

|

||||

|

||||

securityContext:

|

||||

allowPrivilegeEscalation: false

|

||||

runAsUser: 1000

|

||||

runAsGroup: 1000

|

||||

fsGroup: 1000

|

||||

|

||||

# See https://github.com/helm/charts/tree/master/stable/postgresql

|

||||

postgresql:

|

||||

postgresqlUsername: kemal

|

||||

postgresqlPassword: kemal

|

||||

postgresqlDatabase: invidious

|

||||

initdbUsername: kemal

|

||||

initdbPassword: kemal

|

||||

initdbScriptsConfigMap: invidious-postgresql-init

|

||||

|

||||

# Adapted from ../config/config.yml

|

||||

config:

|

||||

channel_threads: 1

|

||||

feed_threads: 1

|

||||

db:

|

||||

user: kemal

|

||||

password: kemal

|

||||

host: invidious-postgresql

|

||||

port: 5432

|

||||

dbname: invidious

|

||||

full_refresh: false

|

||||

https_only: false

|

||||

domain:

|

||||

|

|

@ -333,4 +333,4 @@

|

|||

"Playlists": "قوائم التشغيل",

|

||||

"Community": "المجتمع",

|

||||

"Current version: ": "الإصدار الحالي: "

|

||||

}

|

||||

}

|

||||

|

|

@ -1,7 +1,7 @@

|

|||

{

|

||||

"`x` subscribers": "`x` Abonnenten",

|

||||

"`x` videos": "`x` Videos",

|

||||

"`x` playlists": "",

|

||||

"`x` playlists": "`x` Wiedergabelisten",

|

||||

"LIVE": "LIVE",

|

||||

"Shared `x` ago": "Vor `x` geteilt",

|

||||

"Unsubscribe": "Abbestellen",

|

||||

|

|

@ -127,17 +127,17 @@

|

|||

"View JavaScript license information.": "Javascript Lizenzinformationen anzeigen.",

|

||||

"View privacy policy.": "Datenschutzerklärung einsehen.",

|

||||

"Trending": "Trending",

|

||||

"Public": "",

|

||||

"Public": "Öffentlich",

|

||||

"Unlisted": "Nicht aufgeführt",

|

||||

"Private": "",

|

||||

"View all playlists": "",

|

||||

"Updated `x` ago": "",

|

||||

"Delete playlist `x`?": "",

|

||||

"Delete playlist": "",

|

||||

"Create playlist": "",

|

||||

"Title": "",

|

||||

"Playlist privacy": "",

|

||||

"Editing playlist `x`": "",

|

||||

"Private": "Privat",

|

||||

"View all playlists": "Alle Wiedergabelisten anzeigen",

|

||||

"Updated `x` ago": "Aktualisiert `x` vor",

|

||||

"Delete playlist `x`?": "Wiedergabeliste löschen `x`?",

|

||||

"Delete playlist": "Wiedergabeliste löschen",

|

||||

"Create playlist": "Wiedergabeliste erstellen",

|

||||

"Title": "Titel",

|

||||

"Playlist privacy": "Vertrauliche Wiedergabeliste",

|

||||

"Editing playlist `x`": "Wiedergabeliste bearbeiten `x`",

|

||||

"Watch on YouTube": "Video auf YouTube ansehen",

|

||||

"Hide annotations": "Anmerkungen ausblenden",

|

||||

"Show annotations": "Anmerkungen anzeigen",

|

||||

|

|

|

|||

|

|

@ -8,7 +8,7 @@

|

|||

"": "`x` videos"

|

||||

},

|

||||

"`x` playlists": {

|

||||

"(\\D|^)1(\\D|$)": "`x` playlist",

|

||||

"([^.,0-9]|^)1([^.,0-9]|$)": "`x` playlist",

|

||||

"": "`x` playlists"

|

||||

},

|

||||

"LIVE": "LIVE",

|

||||

|

|

@ -177,7 +177,7 @@

|

|||